I try to do a server restore test once a year. Previous tests are blogged in 2011, 2012, 2013, 2014, 2015, 2016, and 2017 (which includes 2018).

This year I tried something new: I used Veeam instead of Windows Server Backup, and I restored what is now a Dell PowerEdge T30 to an off-site Lenovo TS140.

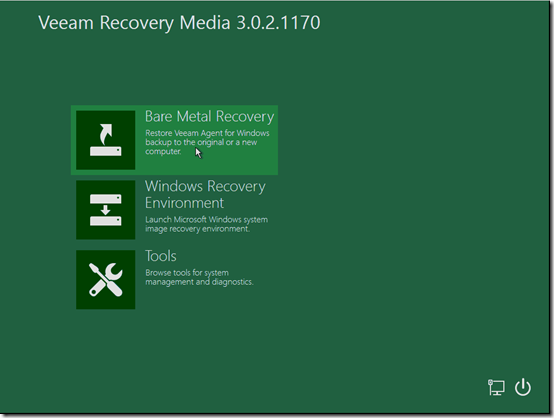

One nice thing about Veeam is that it lets you prepare a recovery ISO for each machine to use for bare-metal recovery. I’ve gotten in the habit of saving that ISO in the top-level folder where Veeam stores backups. So the first thing to do was burn that ISO to a CD. It helped to have an external USB CD drive, as the drive in the TS140 doesn’t seem to be working.

The TS140 was already set to allow legacy boot. Once I booted the TS140 from the CD, I chose Bare Metal Recovery:

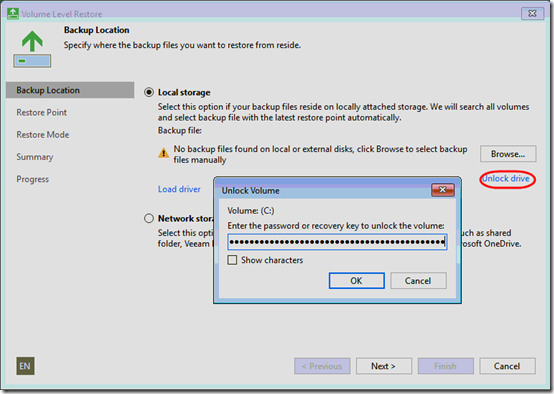

The first page of the recovery wizard asks where to find the backup. Since my backup is on a BitLocker-protected external drive, I needed to click Unlock drive and provide the BitLocker key. Two notes:

- If the internal target drive is empty, the external drive shows up as C: at this point, so yes, you need to unlock C:

- The first time I tried this, I was unable to type in the recovery key field; it simply was not accepting keyboard input. (Keyboard input under Tools > Command Prompt worked.) I rebooted from the CD and then it worked.

I did get the message, “OS disk in backup uses GPT disk. This may cause boot issues on BIOS systems.” Maybe that means on “legacy BIOS” systems as opposed to UEFI? It did not cause an issue this time.

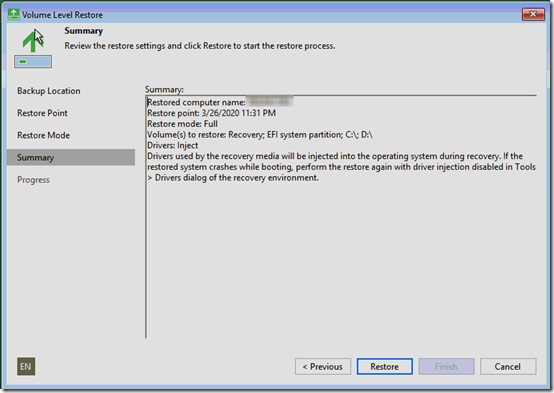

At first I left Restore Mode set to the default Entire computer, but that just said “Attempting to auto-match disks” and dropped me on the Summary page:

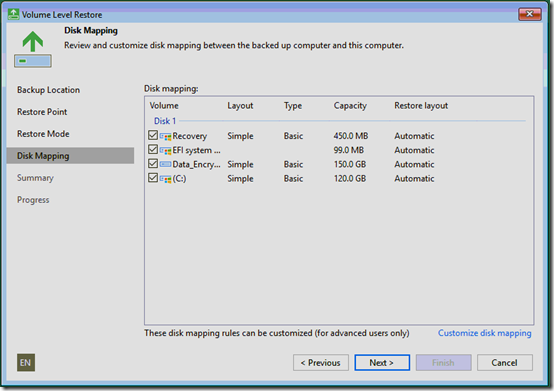

I don’t like not seeing where the restore will go, so I pressed Previous and set Restore Mode to Manual restore (advanced). This enabled the Disk Mapping page, but it still didn’t show which target volume was selected. (Note that on the Restore Mode page, there is in fact a View automatically detected disk mapping link, but I missed that at first and it doesn’t let you change anything anyway.)

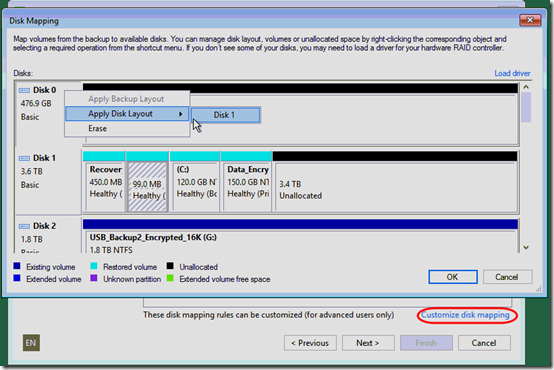

Finally I clicked Customize disk mapping and was able to see the layout:

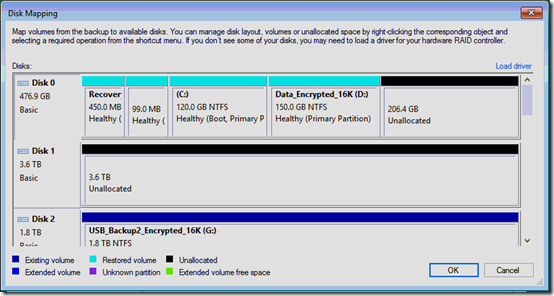

I saw that Veeam had chosen a 4TB internal HDD as the restore target—perhaps because it was bigger than the 1TB source HDD. However, the partitions that I wanted to restore were smaller than the internal SSD, so that’s what I wanted as the target. I had to right-click on Disk 0, the intended target, then choose Apply Disk Layout > Disk 1. This moved the target to Disk 0 (the SSD) and left Disk 1 alone:

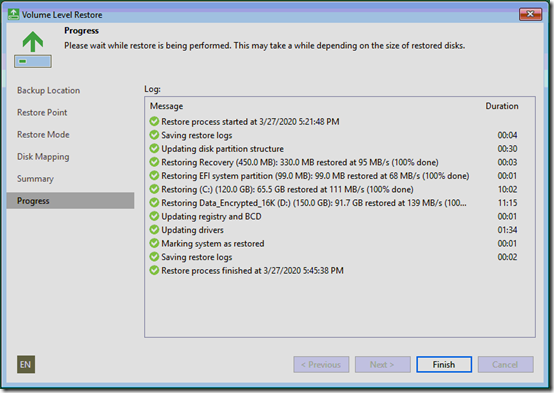
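To illustrate why the auto-match and my preferred target differed, here is a minimal sketch comparing two ways of picking a target disk: by the size of the whole source disk versus by the size of the partitions actually being restored. This is not Veeam's actual matching logic, which I can only guess at. The SSD capacity and the partition sizes are hypothetical values; only the 1TB source and 4TB HDD come from the setup described above.

```python
from dataclasses import dataclass

GB = 10**9
TB = 10**12


@dataclass(frozen=True)
class Disk:
    name: str
    capacity: int  # bytes


def candidates_by_disk_size(source_capacity, targets):
    """Targets at least as large as the entire source disk."""
    return [d.name for d in targets if d.capacity >= source_capacity]


def candidates_by_partition_size(partition_sizes, targets):
    """Targets large enough for just the partitions being restored."""
    needed = sum(partition_sizes)
    return [d.name for d in targets if d.capacity >= needed]


# Disk 0 capacity is an assumption; Disk 1 is the 4TB HDD from the post.
targets = [Disk("Disk 0 (SSD)", 500 * GB), Disk("Disk 1 (HDD)", 4 * TB)]

# Hypothetical partition sizes on the 1TB source disk.
partitions = [1 * GB, 250 * GB]

by_disk = candidates_by_disk_size(1 * TB, targets)
by_partitions = candidates_by_partition_size(partitions, targets)

print("Fits whole source disk:", by_disk)
print("Fits restored partitions:", by_partitions)
```

Under these assumed sizes, only the HDD qualifies when matching on the whole source disk, while both disks qualify when matching on the partitions alone, which is why the SSD was a valid manual choice.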

Finally I was ready to start the restore. Once started, it took less than half an hour to restore the full server (215GB) from an external USB3 drive to an internal SSD.
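As a rough sanity check on that timing, here is a minimal sketch of the arithmetic. It assumes 215GB means decimal gigabytes and treats half an hour as an upper bound on the duration, so the result is a lower bound on the average transfer rate, not a measurement.

```python
def min_throughput_mb_per_s(size_gb: float, max_minutes: float) -> float:
    """Lower bound on average throughput, given an upper bound on duration."""
    total_bytes = size_gb * 1e9      # decimal gigabytes (assumption)
    seconds = max_minutes * 60
    return total_bytes / seconds / 1e6


rate = min_throughput_mb_per_s(215, 30)
print(f"At least {rate:.0f} MB/s on average")
```

That works out to roughly 119 MB/s or better, which is plausible for a USB3 external drive.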

This machine is off site, so I won’t be able to test it on the actual network. In fact, before rebooting, I disconnected the network cable to keep it from causing issues on my office network.

The first boot went very quickly (maybe a minute), but I could not log on (“There are no logon servers available to service the logon request”). Then within a couple of minutes, the machine rebooted on its own. This time, “Applying computer settings” took a few extra seconds, but then I was able to log on. I set a fixed IP address on my office network and, since this is a domain controller, set the DNS server to the same IP. Then I reconnected the network cable.

Within about a minute, I’d received about a dozen emails from my monitoring software about errors on the server (disk drives not found, ping checks failing, etc.). Oops. Now I had the live server and a test server both reporting to the cloud-based monitoring software. I had meant to disable that agent’s service on the test machine before reconnecting the network. After I disabled it, conditions in the monitoring software returned to normal.

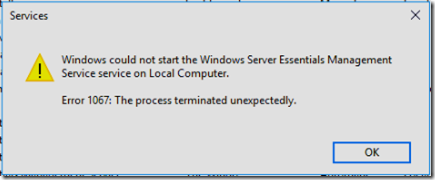

Working through some of the tests in the 2017 post, I did encounter one problem: the Windows Server Essentials Management Service would not start:

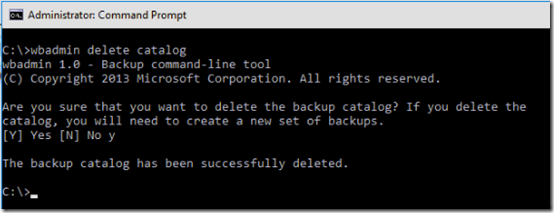

Following a comment in this thread, I confirmed that Windows Server Backup also would not start. Running wbadmin delete catalog at an administrative command prompt fixed both problems.

I blogged about this as a separate issue last year.

One other issue I encountered was that the test server was unable to email the health report through the IIS 6 SMTP service running on the same machine: “550 5.7.1 Unable to relay for <email sender>.” It turns out that this was due to the test server having a new IP address that was not in the range of addresses that I had configured the SMTP service to relay. Once I added the new IP to the list of permitted relay senders, the email went through.
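The relay failure comes down to a simple membership test: is the sender's IP inside one of the permitted ranges? Here is a minimal sketch of that check. It is not how the IIS SMTP service is implemented, and the addresses are hypothetical stand-ins for the old and new server IPs.

```python
import ipaddress


def relay_allowed(client_ip: str, permitted: list[str]) -> bool:
    """Return True if client_ip falls inside any permitted address or range."""
    addr = ipaddress.ip_address(client_ip)
    return any(
        addr in ipaddress.ip_network(net, strict=False) for net in permitted
    )


# Hypothetical relay list: loopback plus the live server's address.
permitted = ["127.0.0.1/32", "192.168.1.10/32"]

old_ip_ok = relay_allowed("192.168.1.10", permitted)   # live server
new_ip_ok = relay_allowed("192.168.1.50", permitted)   # restored test server

# Adding the test server's address mirrors the fix described above.
permitted.append("192.168.1.50/32")
fixed_ok = relay_allowed("192.168.1.50", permitted)

print(old_ip_ok, new_ip_ok, fixed_ok)
```

A restored server that comes up on a different address will fail this kind of check until its new IP is added, so it is worth reviewing any IP-based allow lists as part of a restore test.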

I started using Veeam with one of my customers recently so that I could easily back up all five VMs. I should go through this restore procedure next, thanx!