I have a Lenovo TS140 server running Intel Rapid Storage Technology enterprise version 4.3.1.1152. Due to an intermittent error telling me that a drive was offline and then immediately back online, I opened the server and re-seated all the hard drive cables. When I got back to my office, I had an email from RSTe telling me that the 500GB RAID 1 volume was degraded.

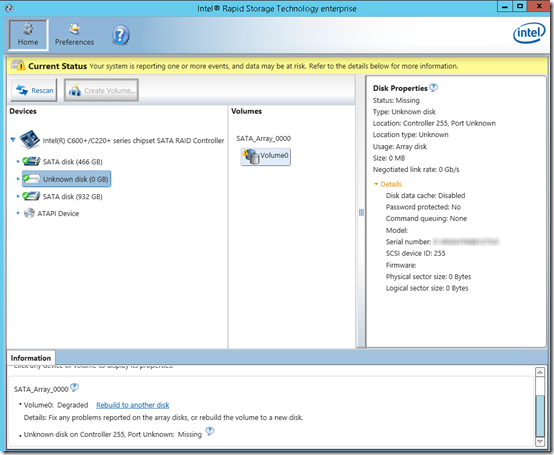

I logged on to the machine and viewed the Intel RSTe user interface. It looked like both disks in the RAID volume were there, but the second was shown as an “Unknown disk”. The serial number was shown, but the size was 0 MB and all other values were blank or 0:

The only available option was to Rebuild to another disk, which wanted to make my 1TB non-RAID internal drive (used to store backups) into the second drive in the array.

I described this situation to a Lenovo support engineer. He asked me to run the Lenovo server diagnostics under Windows. Not surprisingly, these diagnostics can only see logical drives. The diagnostics found no problems because the RAID 1 drive was operating correctly in its degraded (unmirrored) state.

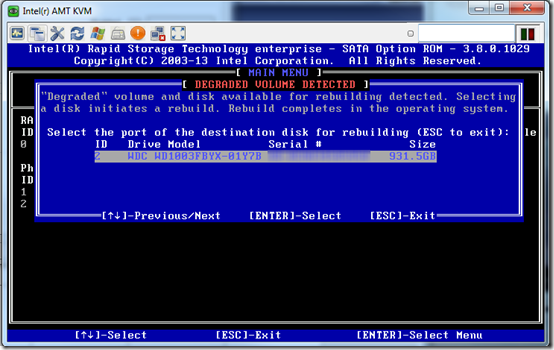

Next, I rebooted the server and used the out-of-band KVM feature of vPro to view the pre-boot RSTe screens. The first popup screen also offered the option of rebuilding to the 1TB drive. This is a pretty dangerous first option. It makes it sound like a good idea to wipe out an entire secondary disk (degraded volume found, disk available for rebuild):

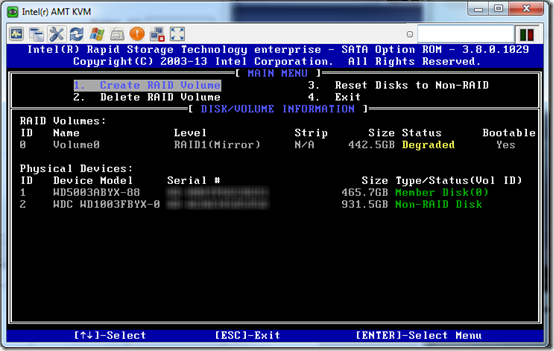

Fortunately, I knew that I didn’t want to overwrite the 1TB drive, so I pressed Escape and got to the main screen. Here I was finally able to confirm that the controller was only seeing two of the three drives. The second 500GB drive was simply missing:

I went back on site and found that one of the SATA cables was not connected. Either I had failed to reconnect it, or had knocked it loose when putting the cover back on the case. Once I re-attached that cable and started the server, the controller saw the second 500GB drive and started rebuilding the RAID 1 array. (Since Windows had changed the first drive when it was booted by itself, the second drive no longer matched.) The rebuild caused a significant resource drain—it took Windows a really long time to boot and start services—but when the rebuild completed in about 90 minutes, performance was back to normal.

Next Time

Next time, realize that the misleading RSTe interface under Windows may be showing you the stored RAID configuration, not the actual drives that are present. Go to the pre-boot UI, press Escape on any pop-up that appears, then look at which drives are physically present.
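The check I ended up doing by eye can be written down as a small sketch: compare the serial numbers the stored RAID configuration expects against what is actually reported, and treat a member that is absent or shows 0 MB as not physically present. Everything here is hypothetical and for illustration only: the `DiskReport` type, the `find_missing_members` function, the serial numbers, and the sizes are made up, and nothing in it talks to RSTe. You would have to fill in the lists by hand from the pre-boot screen.

```python
from dataclasses import dataclass


@dataclass
class DiskReport:
    """One disk as shown in a RAID UI (hypothetical structure, not an RSTe API)."""
    serial: str
    size_mb: int


def find_missing_members(expected_serials, reported_disks):
    """Return serials of array members that are absent or report a size of 0 MB."""
    by_serial = {disk.serial: disk for disk in reported_disks}
    missing = []
    for serial in expected_serials:
        disk = by_serial.get(serial)
        # A member the UI lists with 0 MB is treated like one that is not there.
        if disk is None or disk.size_mb == 0:
            missing.append(serial)
    return missing


# Made-up serial numbers and sizes, only to illustrate the comparison.
expected = ["SER-A", "SER-B"]          # the two RAID 1 members
reported = [
    DiskReport("SER-A", 476940),       # healthy member
    DiskReport("SER-B", 0),            # listed as "Unknown disk", 0 MB
    DiskReport("SER-C", 953869),       # non-RAID backup drive, not a member
]

print(find_missing_members(expected, reported))
```

If the result is not empty, the likely fix is a cable or a drive, not a rebuild onto some other disk.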